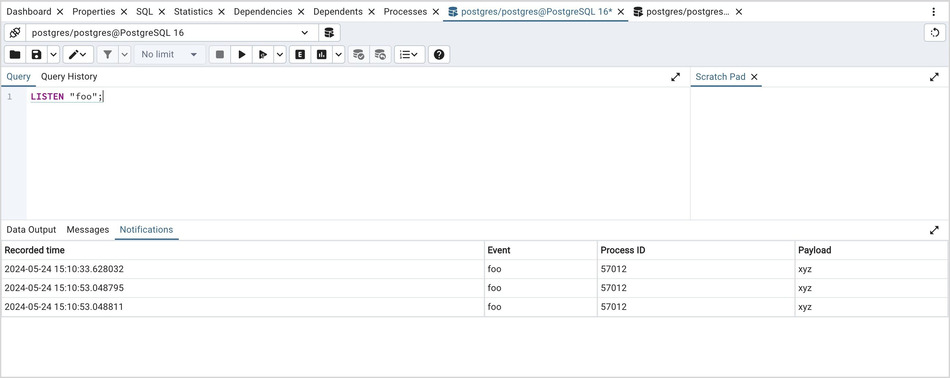

Now run the following query in pgAdmin4: SELECT * FROM world_population Īfter executing the above query, click on the pointed button. Open the query tool by right-clicking on the database. Using pgAdmin4, create a graphical representation of the data. Hurrahh!!! We’ve successfully written the ETL pipeline, which transformed the scraped data from the website and load data into the PostgreSQL database. Now, open pgAdmin, and you’ll see the scraped data in your database. Now re-run the spider by using the following command: scrapy crawl worldpopulation You can read about the steps from their documentation.Īfter establishing the connection, we create a table with column names and insert the values. We’re using the psycopg2 library to create a connection with PostgreSQL. ("Transfering Data to postgres Database") # CONNECTING SPIDER WITH THE POSTGRES DATABASEĬREATE TABLE IF NOT EXISTS world_population( The Item Pipeline takes items scraped by a spider and sends them via a series of components performed in order and handled by Scrapy itself, thus making it easy to load data to the database.Ĭopy and paste the following code into the pipelines.py file. The next step is to load the scraped data to the PostgreSQL database. Until this point, we have successfully developed a spider that is not only scraping the data but transforming it. The information related to the country has been downloaded into the project as a CSV file. Start the spider using the following command: scrapy crawl worldpopulation -o country_data.csvs Save the files, and then use your PowerShell to change the directory to the folder containing the spider. # define the fields for your item here like: Also, copy the following code into items.py. This will extract the data from the website and transform it, which means removing the commas and percentage signs from the data. 
# FUNCTION TO REMOVE PERCENT SIGN FROM STRING AND CONVERT THE STRING TO FLOAT DATA TYPE # FUNCTION TO REMOVE COMMAS FROM THE STRING AND CHANGE THE STRING INTO INTEGAR DATA TYPE Item= datetime.strptime(response.xpath(f'//table/tbody/tr/td//text()').get()) # SPIDER START SCRAPING FROM 'start_urls' '''CREATED SPIDER NAMED WORLDPOPULATIONSPIDER TO SCRAPE DATA FROM WORLDOMETERS''' import loggingįrom ems import WorldometersItemĬlass WorldpopulationSpider(scrapy.Spider): You can find the complete source code for this project here, or you can paste the following code into your spider file. scrapy genspider worldpopulation Įxtracting & Transforming the Data from the Website: For more information, read the scrapy documentation. Run the following command to create your first spider. To build the scrapy project, use the following command and be sure to follow the instructions: scrapy startproject worldometers Now pip installs the scrapy and psycopg2 libraries in your Venv. Write the following commands in sequence. Now, run the following command: python3 -m venv dependencies Create a new folder named “worldometer-project.” Then, open PowerShell and change the directory to the newly created folder. We’re scraping Pakistan’s country data from the “worldometer” website using scrapy in this article.įirst of all, create a new virtual environment on your local machine. It can be used to get data from APIs or as a general-purpose web crawler. Scrapy is a free, open-source framework for crawling the web.

Web scraping can be intimidating, so I’ll break down the process for ease.īefore starting, you must have installed PostgreSQL and pgAdmin4 on your local machine. This will be a worthwhile project to work on for web scrapers who are interested in gaining an understanding of how to scrape the web and load data into a database. Using pgAdmin4, create a graphical representation of the data.Building an ETL pipeline to load data to the PostgreSQL database.

The following are some of the topics that we will learn in this article After that, we used the data for analytics and for creating business reports. Web scraping is a way to save a lot of time and effort by automatically accessing a website and pulling out vast amounts of information from it.
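The transformation step in the spider (removing commas and percentage signs, then converting the strings to numbers) can be sketched with two small helpers. This is a minimal sketch, not the project's own code: the names `to_int` and `to_float` are hypothetical, and the sample strings are illustrative, not values scraped from the site.

```python
def to_int(text: str) -> int:
    """Remove thousands separators (commas) and convert the string to an int."""
    return int(text.replace(",", "").strip())


def to_float(text: str) -> float:
    """Remove the percent sign (and any commas) and convert the string to a float.

    The value stays in percent units, e.g. "2.15 %" becomes 2.15, not 0.0215.
    """
    return float(text.replace("%", "").replace(",", "").strip())


if __name__ == "__main__":
    # Illustrative input strings only
    print(to_int("1,234,567"))  # 1234567
    print(to_float("2.15 %"))   # 2.15
```

Both helpers raise `ValueError` for text that is not a number after cleaning, so a spider using them may want to catch that for empty or placeholder cells.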
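The items.py step declares which fields the spider fills in. Besides `scrapy.Item` subclasses, Scrapy (2.2 and later) also accepts dataclass instances as items, so a stand-in can be written with only the standard library. This is a swapped-in technique, not the article's `scrapy.Item` code, and the field names are hypothetical because the article does not list the fields it scrapes.

```python
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class WorldometersItem:
    """Hypothetical item holding one row of cleaned country statistics.

    Field names are assumptions; Scrapy 2.2+ accepts dataclass instances as items.
    """
    year: Optional[int] = None
    population: Optional[int] = None
    yearly_change_percent: Optional[float] = None
    net_change: Optional[int] = None


if __name__ == "__main__":
    # Dummy values, not real statistics
    item = WorldometersItem(year=2020, population=1000,
                            yearly_change_percent=2.0, net_change=20)
    print(asdict(item))
    # {'year': 2020, 'population': 1000, 'yearly_change_percent': 2.0, 'net_change': 20}
```

With a `scrapy.Item` subclass instead, each field would be declared as `year = scrapy.Field()` and so on.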
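The pipelines.py step (connect with psycopg2, create `world_population` if it does not exist, insert each item) can be sketched as a class using Scrapy's `open_spider`, `process_item`, and `close_spider` pipeline hooks. This is a sketch under assumptions: the class name, the placeholder credentials, and the column names are hypothetical. psycopg2 is imported inside `open_spider` so the class can also be exercised with any DB-API connection passed to the constructor.

```python
from dataclasses import asdict, is_dataclass

# Hypothetical columns: adjust them to the fields your item really defines.
COLUMNS = ("year", "population", "yearly_change_percent", "net_change")

CREATE_SQL = """
CREATE TABLE IF NOT EXISTS world_population (
    year INTEGER,
    population BIGINT,
    yearly_change_percent REAL,
    net_change BIGINT
)
"""

# psycopg2 uses %s placeholders; values are passed separately, never formatted in.
INSERT_SQL = "INSERT INTO world_population ({}) VALUES ({})".format(
    ", ".join(COLUMNS), ", ".join(["%s"] * len(COLUMNS))
)


class WorldometersPipeline:
    """Sketch of a Scrapy item pipeline that loads items into PostgreSQL."""

    def __init__(self, connection=None):
        # A DB-API connection can be injected (e.g. in tests); otherwise
        # open_spider() creates one with psycopg2.
        self.connection = connection
        self.cursor = None

    def open_spider(self, spider):
        if self.connection is None:
            import psycopg2  # imported lazily so this module loads without it

            # Placeholder credentials: replace with your own database settings.
            self.connection = psycopg2.connect(
                host="localhost", dbname="postgres",
                user="postgres", password="postgres",
            )
        self.cursor = self.connection.cursor()
        self.cursor.execute(CREATE_SQL)
        self.connection.commit()

    def process_item(self, item, spider):
        # Accept dataclass items as well as dict-like items (scrapy.Item, dict).
        data = asdict(item) if is_dataclass(item) else dict(item)
        self.cursor.execute(INSERT_SQL, tuple(data.get(col) for col in COLUMNS))
        self.connection.commit()
        return item  # hand the item on to any later pipeline components

    def close_spider(self, spider):
        self.cursor.close()
        self.connection.close()
```

Scrapy only runs a pipeline that is registered in settings.py, for example `ITEM_PIPELINES = {"worldometers.pipelines.WorldometersPipeline": 300}`; the number (customarily 0 to 1000) sets the order in which pipeline components run, lowest first. Committing after every insert keeps the sketch simple; for larger crawls, committing once in `close_spider` would save round trips.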
